Is your content ready for AI search?

Search is changing faster than most marketing teams realise. Google's AI Overviews now appear in a significant share of search results, especially for longer, more specific queries. ChatGPT handles hundreds of millions of searches every week. Microsoft Copilot, Claude, and Perplexity are growing rapidly. And according to McKinsey research, half of consumers now intentionally seek out AI-powered search engines, with adoption spanning all age groups. For many of those users, an AI-generated answer is the first, and sometimes only, thing they see.

We've had a close-up view of this shift. Over the past year, we've been working with our colleagues on the Insytful team to build Insytful AI Search, an on-site AI search platform that reads and answers questions from an organisation's own published content. That work has taught us a lot about what makes content easy for AI to find, understand, and present accurately – and where most organisations have gaps they haven't yet considered. We've seen robots.txt files that inadvertently block AI crawlers, important information hidden inside accordion elements that AI can't access, and meta descriptions so generic that AI tools have no basis for deciding whether a page is worth citing.

To understand why these issues matter more than they used to, it helps to look at how search itself has changed.

The shift from rankings to citations

For years, the goal of SEO was clear: rank as high as possible on a search engine results page. You optimised for keywords, built backlinks, improved page speed, and climbed the rankings. Users scanned the results and clicked through to your site.

AI search works differently. When someone asks ChatGPT or Perplexity a question, they don't receive a list of links – they get a synthesised answer, drawn from multiple sources and delivered as a single, coherent response. If your content is cited, you're part of that answer. If it isn't, you may not feature at all.

Google's own AI features follow a similar pattern. AI Overviews summarise information at the top of the results page, often answering the user's question before they reach the traditional blue links. Even organisations that rank well organically can see their traffic decline if AI is answering the question without directing users to their site.

What this means in practice is that the metric that matters is shifting. It's no longer just about where you rank – it's increasingly about whether AI tools trust your content enough to cite it. This emerging discipline, sometimes called Generative Engine Optimization (GEO), is becoming as important as traditional SEO.

What AI looks for, and where most content falls short

AI search tools don't evaluate content in the same way traditional search engines do. They're looking for content that is clearly structured, easy to extract, factually accurate, and corroborated by other sources. That means some of the approaches that worked well for traditional SEO – keyword-dense copy, long-form content designed to keep users on the page, carefully constructed internal linking – aren't necessarily what gets your content cited by AI.

In our experience, there are several areas where organisations commonly fall short.

Content isn't structured for extraction

AI tools pull specific passages from your pages to use in their answers. If your content buries the key information halfway down the page, or relies on context from earlier sections to make sense, the AI may skip it entirely, or worse, misrepresent it. Content needs to be written so that individual sections can stand alone as clear, complete answers.

Technical access is being overlooked

A growing number of AI crawlers now visit websites alongside traditional search engine bots – but they need permission to access your content. Many organisations haven't updated their robots.txt files to account for these new crawlers, which means their content may be completely invisible to some AI platforms. There's also an important distinction between crawlers that feed AI training data and those that power real-time AI search results, and managing them requires a deliberate strategy rather than a blanket allow-or-block approach.
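As a rough illustration of that distinction, the Python sketch below uses the standard library's urllib.robotparser to check which AI crawlers a robots.txt file admits. The user-agent tokens and the sample rules are assumptions for illustration only; crawler names change, so confirm them against each vendor's current documentation. In this sample, a crawler associated with model training is blocked while everything else, including search-oriented crawlers, remains allowed.

```python
from urllib.robotparser import RobotFileParser

# Illustrative user-agent tokens (assumption): verify current names with each vendor.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]

# Hypothetical robots.txt: block one training crawler, allow everything else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def check_ai_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Return, for each listed crawler, whether the rules allow it to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


access = check_ai_access(SAMPLE_ROBOTS_TXT)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Running the same check against your own robots.txt is a quick way to spot a crawler that has been blocked by accident, for example by an old catch-all rule.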

Trust signals are weak or inconsistent

AI models don't just look at your website in isolation. McKinsey found that a brand's own sites typically account for just 5 to 10 percent of the sources AI search references, with the rest coming from third-party publishers, review sites, affiliates, and user-generated content. If your brand information is inconsistent across these sources, that erodes the AI's confidence in citing you. Research has shown that brands mentioned consistently across multiple independent sources are significantly more likely to appear in AI-generated answers.

Metadata is treated as an afterthought

Page titles and meta descriptions have always mattered for SEO, but they take on a new role in AI search. AI models use meta descriptions as machine-readable summaries to help decide whether a page is worth citing. A vague or generic meta description doesn't just cost you clicks – it can cost you citations. When an AI tool is deciding which of several sources to reference in its answer, the meta description acts as a quick summary of what the page offers. If yours says something like "Learn more about our services" while a competitor's clearly states what the page covers and who it's for, the AI is more likely to treat the competitor's page as the better source.
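A simple audit can catch the weakest descriptions before an AI tool does. The Python sketch below, using only the standard library, extracts a page's meta description and flags ones that are missing, very short, or built on stock phrases. The phrase list and the 50-character threshold are hypothetical choices for illustration, not established standards.

```python
from html.parser import HTMLParser

# Hypothetical list of stock phrases that suggest a generic description.
GENERIC_PHRASES = ("learn more", "welcome to", "click here")


class MetaDescriptionParser(HTMLParser):
    """Captures the content of <meta name="description"> if present."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        if (attr_map.get("name") or "").lower() == "description":
            self.description = (attr_map.get("content") or "").strip()


def audit_meta_description(html: str, min_length: int = 50) -> list[str]:
    """Return a list of problems found; an empty list means no flags were raised."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    description = parser.description
    if not description:
        return ["missing"]
    problems = []
    if len(description) < min_length:  # threshold is an assumption
        problems.append("too short")
    if any(phrase in description.lower() for phrase in GENERIC_PHRASES):
        problems.append("generic phrasing")
    return problems


vague = '<head><meta name="description" content="Learn more about our services"></head>'
specific = (
    '<head><meta name="description" content="A checklist for auditing robots.txt, '
    'metadata, and content structure for AI search, written for marketing teams."></head>'
)
print(audit_meta_description(vague))
print(audit_meta_description(specific))
```

A check like this only flags candidates for review; whether a description clearly states what the page covers and who it's for still needs a human read.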

Content isn't being kept up to date

For evergreen topics, AI tools will happily cite older content if it's accurate and authoritative. But for anything where the facts move, from industry statistics to product details to regulatory guidance, outdated information is a liability. AI models cross-reference sources, and if your page contains stale data that conflicts with more recent sources, it's less likely to be cited. Including clear publication and update dates can help signal to both AI tools and users that your content is actively maintained.
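One common way to make those dates machine-readable is schema.org structured data embedded in the page as JSON-LD. The Python sketch below generates such a block; the function name and headline are hypothetical, and whether any particular AI tool reads these fields is an assumption rather than something confirmed here.

```python
import json
from datetime import date


def article_dates_jsonld(headline: str, published: date, modified: date) -> str:
    """Build a schema.org Article JSON-LD string with publication and update dates."""
    if modified < published:
        raise ValueError("modified date cannot precede published date")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),  # ISO 8601, e.g. 2024-03-01
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Hypothetical article details for illustration.
snippet = article_dates_jsonld(
    "Is your content ready for AI search?",
    published=date(2024, 3, 1),
    modified=date(2024, 9, 15),
)
print(snippet)
```

The output would sit inside a script element of type application/ld+json in the page head, alongside a visible "last updated" date for human readers.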

Why this matters more than you might think

It's tempting to view AI search as a niche concern, something that matters for tech-savvy early adopters but doesn't yet affect mainstream audiences. The data tells a different story. McKinsey estimates that unprepared brands could see traffic from traditional search channels decline by 20 to 50 percent as decision-making shifts to AI platforms.

Google's AI Overviews appear across a wide range of everyday search queries, not just technical ones. ChatGPT has over 700 million weekly active users. Perplexity processes hundreds of millions of queries every month. And Microsoft is embedding Copilot across its entire product ecosystem, putting AI search in front of millions of enterprise users.

More importantly, the users who find your brand through AI search tend to be more valuable. They arrive with clearer intent, having already received a recommendation or citation from a source they trust. They're not browsing a list of options. They've been pointed to you specifically.

And this isn't limited to informational searches. Research by Semrush shows that AI Overviews are increasingly appearing for commercial and transactional queries – the searches closest to a purchasing decision. Even branded, navigational searches are now triggering AI-generated answers, meaning the impact extends well beyond top-of-funnel content.

The flip side is equally significant. If your competitors are being cited and you aren't, you're not just missing traffic. You're missing the endorsement that comes with being part of an AI-generated answer.

It's not about starting from scratch

The good news is that much of what makes content work for AI search overlaps with what makes it work for users. Clear writing, logical structure, accurate information, and good metadata have always been hallmarks of quality content. In most cases, there's no need to throw out your existing strategy – you just need to refine it to account for how AI tools discover, evaluate, and present information.

But there are specific areas where AI search requires a different approach, from managing AI crawler access to writing content that's structured for extraction rather than just readability. And the landscape is evolving quickly. New AI platforms are emerging, crawlers are multiplying, and the standards for what gets cited are still being established.

The organisations that act now, while AI search optimisation is still a relatively uncrowded field, will have a meaningful head start. Those that wait for the dust to settle risk finding that their competitors have already secured the citations and visibility that become increasingly difficult to dislodge once established.

Don't let competitors claim the citations you're missing

Every time an AI tool answers a question using a competitor's content instead of yours, that's visibility, credibility, and potential business you've lost. And unlike a dip in search rankings, you probably won't notice it happening – because the traffic simply never arrives. Your pages may still rank well in traditional search while AI tools quietly direct potential visitors elsewhere.

The longer you wait to address this, the more ground competitors gain. As the Semrush research mentioned earlier shows, AI Overviews are now appearing for commercial and transactional searches, not just informational ones, so the business impact is only growing. And with only 16 percent of brands currently tracking their AI search performance, according to McKinsey, most organisations don't yet know where they stand.

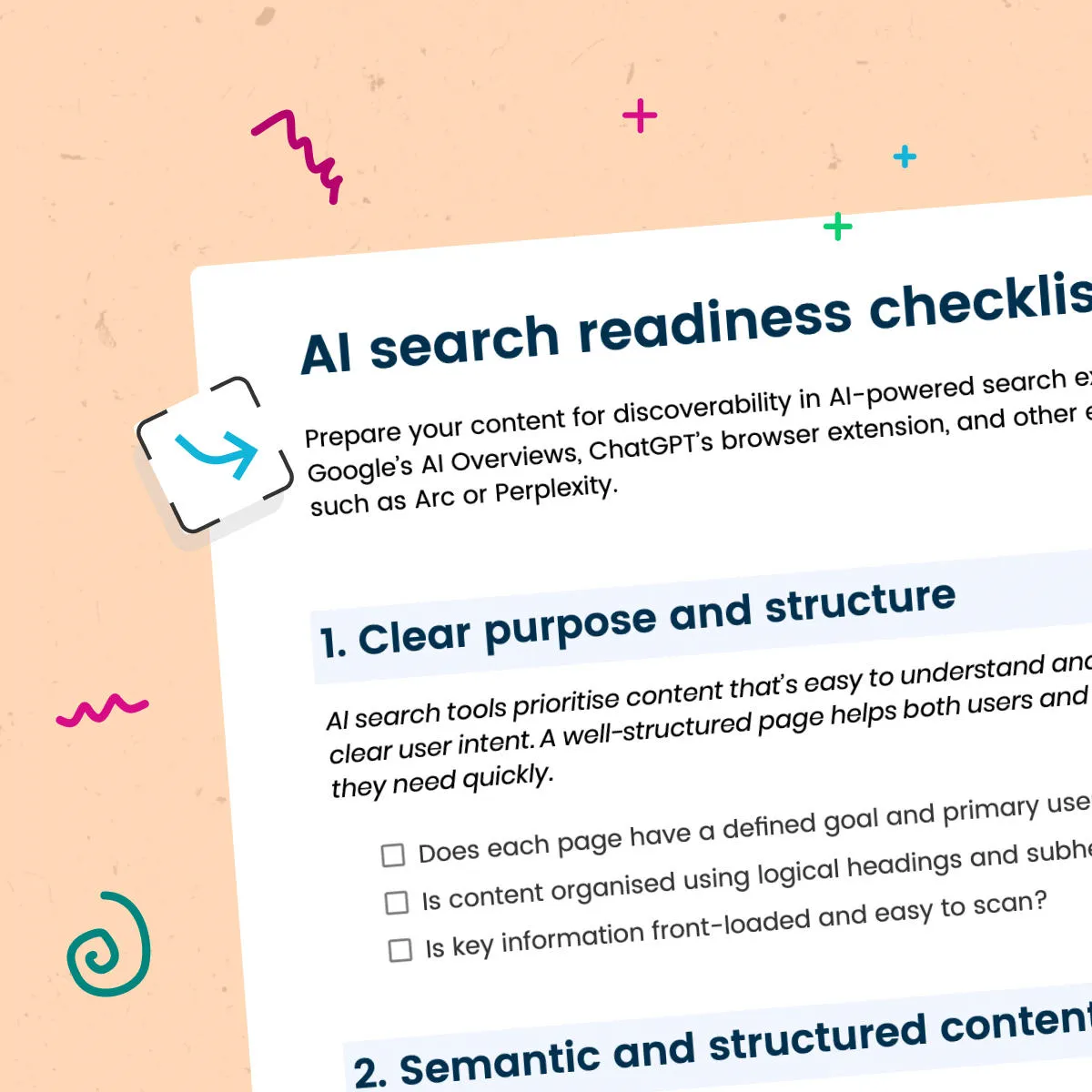

We've put together a comprehensive AI search readiness checklist covering 10 key areas, from content structure and metadata to AI crawler management, trust signals, and multi-platform visibility. It gives marketing and content teams a practical framework for auditing their content and closing the gaps before competitors fill them.